5-Minute DevOps: Solving the CD Talent Problem

In 2014, our warehouse management system was a nightmare of complexity: a 25 MLOC (million lines of code) monstrosity so tightly coupled that all 900-ish applications had to be deployed at the same time. Each release meant a week in a 24x7 war room and many sleepless nights after that. It was a major business problem that needed to be solved.

Four years later, Accelerate came out and asserted:

“Continuous delivery improves both delivery performance and quality, and also helps improve culture and reduce burnout and deployment pain.”

– Accelerate by Nicole Forsgren Ph.D., Jez Humble & Gene Kim

I didn’t need the book to tell me that — it had already changed my life. It'd been a few years since our first CD pilot in Walmart Logistics, and I knew firsthand it was a much better way to live. Since then, I’ve worked to help many teams learn the engineering and teamwork required for CD because I believe every team should experience what we did during that pilot.

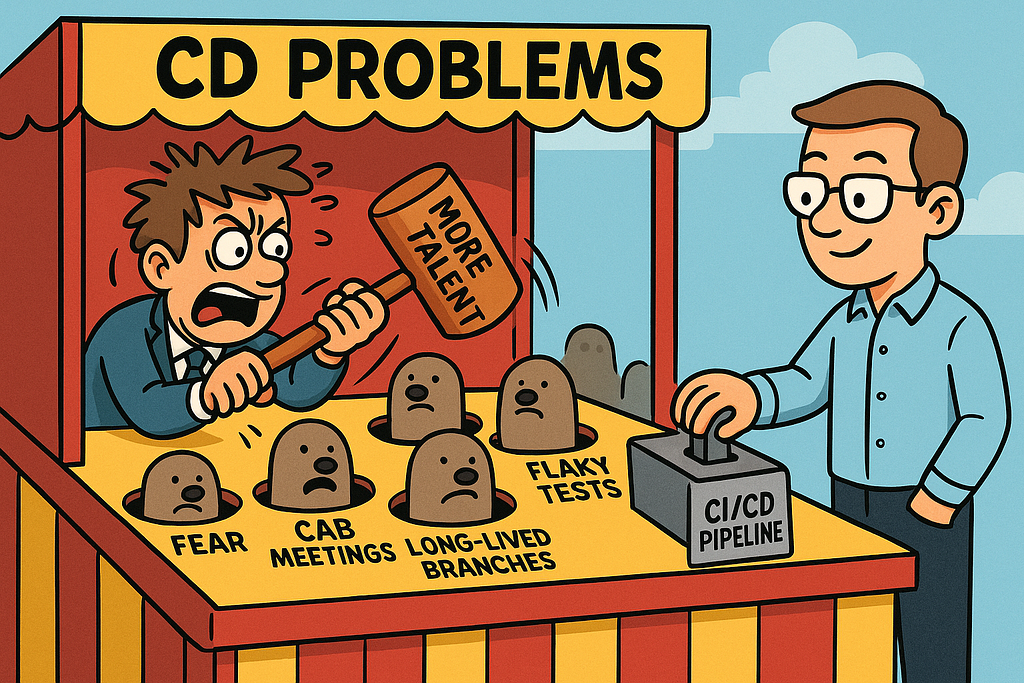

I’ve heard many excuses for “why CD won’t work here.” I’ve been told that their teams aren’t mature enough for CD, that their testing isn’t good enough, or that their teams lack the engineering talent.

“We’ll try it once they get good enough.”

Fail!

You don’t learn to ride a bike by waiting until you’re good enough. You remove the training wheels and find relatively safe places to fall.

If you want better engineering and better business outcomes, use your daily work to improve how you do daily work. Solve the problem of “why can't we CD?”

Embracing the Suck

When we began our journey, our teams were organized into feature teams with no domain boundaries. Each picked up whatever story was next in the backlog, regardless of where it lived in the system. The result was a codebase no one truly owned and everyone could break.

We were trying to manage this chaos with the Scaled Agile Framework (SAFe). It gave us structure, but also friction. Every feature required coordination across dozens of teams and languages, all coupled through a single shared database. Our lead time for change — the time from business request to production — was roughly a year.

Releases were massive, high-risk events. Deploying slightly less than once a quarter felt like a victory, even though each release meant hours of downtime at each distribution center, a week of 24x7 support in the office for each pilot, and weeks of recovery work afterward.

We weren’t just suffering from technical debt; we were drowning in organizational debt. Everyone knew the system was unsustainable, but no one could see a path out of it. Something had to change.

The Moonshot Challenge

Our saving grace was leadership. Our SVP, Randy Salley, and Senior Director, Kim Bowen, were outward-looking leaders who cared deeply about both results and people. Randy had been reading Team of Teams and The Phoenix Project and decided we needed to try something new. He gathered the senior engineers and said:

“I need you to figure out how we can deliver every two weeks.”

Challenge accepted — except we decided daily was a better goal. Go big or go home.

I’ve written elsewhere (“Agile Rehab”) about the strategic steps we took to shift from project to product teams, so I won’t repeat that here. Enabling CD was one thing; executing it was another. We had so much to learn.

“You keep talking theory…”

From our ivory tower, we began preaching: 90% test coverage! Test-driven development! Continuous integration!

Teams dutifully wrote tests, and we learned not to treat test coverage as a goal.

You know what’s more terrifying than no tests? Tests that cover hundreds of lines of code with:

```java
assertTrue(true);
```
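To make the point concrete, here is a minimal plain-Java sketch (no JUnit, so it stays self-contained). The pallet-weight rule and every name in it are hypothetical. Both "tests" execute every line of the method under test, so a coverage tool would score them the same, but only one of them can ever fail.

```java
final class CoverageDemo {

    // Hypothetical intended behavior: pallets over 1,000 kg are rejected.
    static boolean acceptPallet(int weightKg) {
        return weightKg <= 10_000; // bug: the limit should be 1_000
    }

    // Executes every line of acceptPallet, so it counts toward coverage,
    // but checks nothing about the result.
    static boolean vacuousTest() {
        acceptPallet(500);
        acceptPallet(5_000);
        return true; // the moral equivalent of assertTrue(true)
    }

    // Checks the behavior the business actually asked for.
    static boolean behavioralTest() {
        return acceptPallet(500) && !acceptPallet(5_000);
    }

    public static void main(String[] args) {
        System.out.println("vacuous test passes:    " + vacuousTest());    // true
        System.out.println("behavioral test passes: " + behavioralTest()); // false, bug caught
    }
}
```

Same coverage, very different value: the vacuous test stays green no matter what the code does.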

During my 1-on-1 with my manager, he challenged me about our lack of progress. “You all keep talking theory. How will we execute?”

I responded, “Leadership pulled all of the tech leads off of the teams into a centralized design team. Now, instead of direct responsibility for outcomes, we only have influence and no visibility into the real challenges. Put me back on a development team!”

So, they put four of us back as tech leads on the first four pilot teams.

Walking the Talk

You don’t know what you don’t know until you try it. I got to learn firsthand how to apply what we thought we knew by facing each problem as it came and staying one chapter ahead of the team.

The team wasn’t hand-picked. We were average: 13 developers, a business analyst, a scrum master, and a product owner from the business side. Developers ranged from me (19 years of WMS experience) to new college grads. Most had little domain knowledge: they had worked on feature teams for a decade, turning specs into code, with no exposure to real business problems. Now they owned a critical business process and were learning on the fly.

Later, that became an important data point: if an average team can become high-performing just by changing how it works, excuses about “talent” collapse.

Why Can’t We CI?

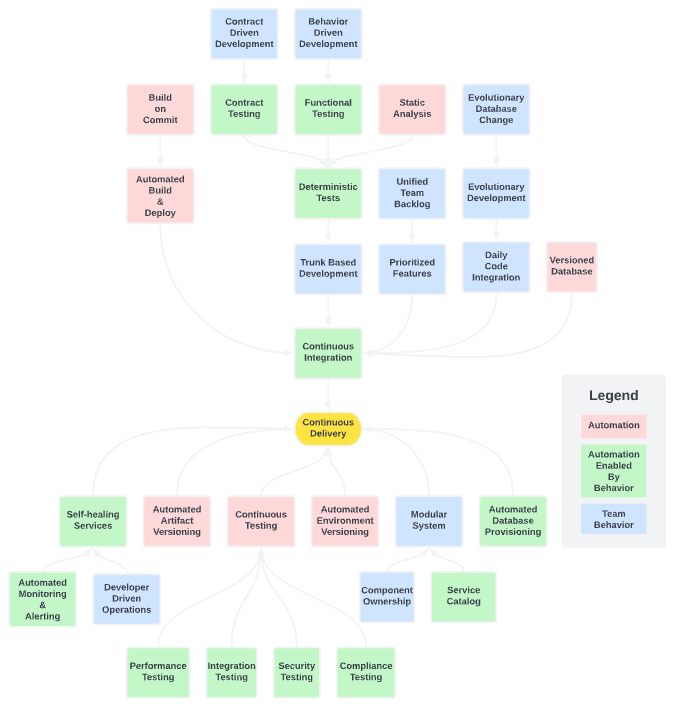

CD is a big elephant to carve up, so we needed some smaller bites. We’d been pushing teams on CI for months, with no traction. Using the CD Maturity Model from Continuous Delivery, I created a dependency diagram of behaviors and platform capabilities that we needed.

This gave me a reasonably solid roadmap of what to tackle. We’d created a small platform team to create templates for our core tech stacks to reduce the cognitive load of every team learning Jenkins, so my focus was on the blue and green boxes.

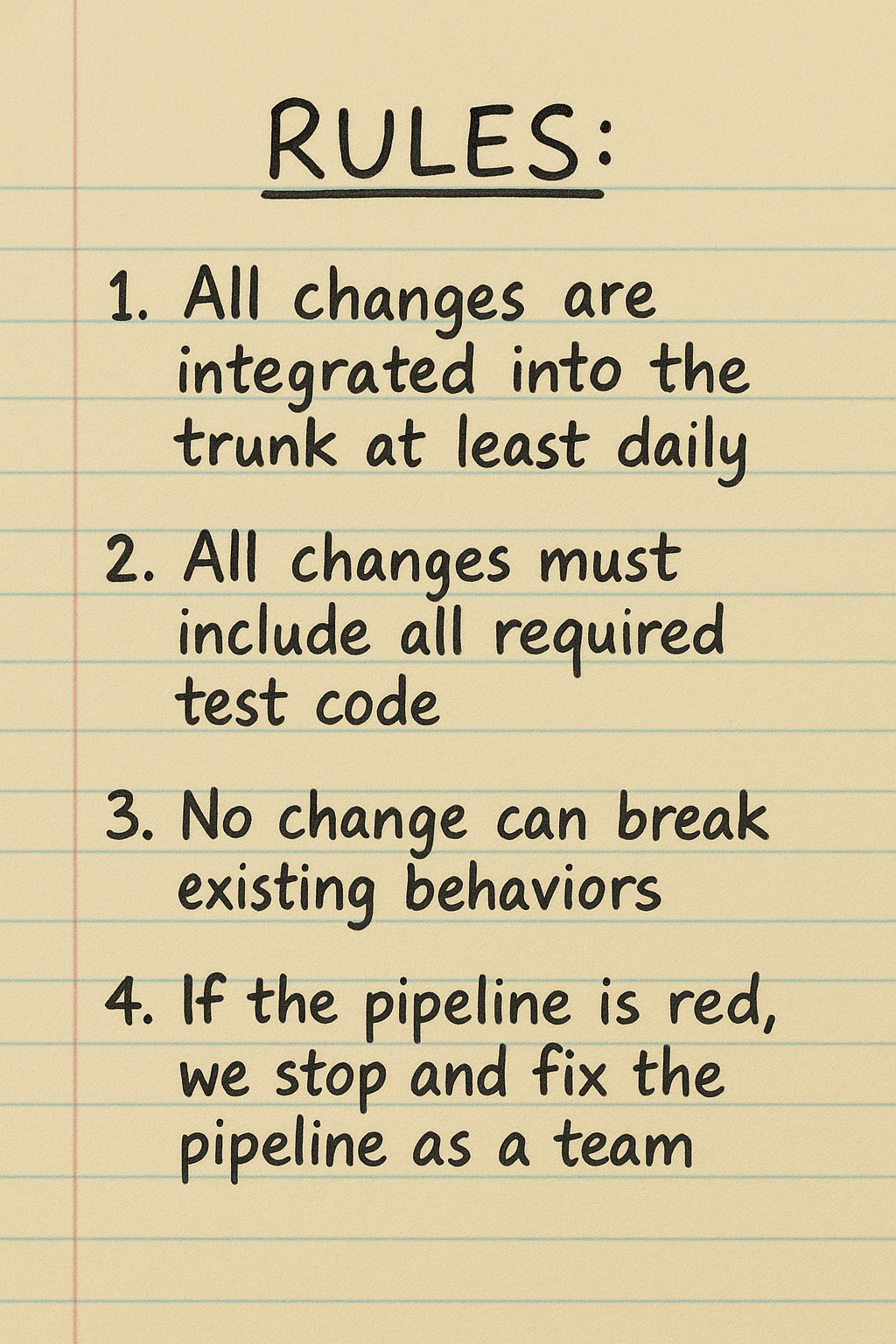

First, we needed to solve CI before moving on to CD. To do that, we needed to define CI clearly. So, I wrote the rules on a piece of paper and hung it up in our team area.

Then we used that as our North Star:

“What’s stopping us from doing that today?”

So Many Problems

Before we could do CI, we had several problems to address.

- Testing Knowledge: We needed to make testing a leading activity performed before and during development, rather than a lagging activity done by our (soon-to-be-obsolete) testing team.

- Business Knowledge: We needed to spread business knowledge to make the testing effective and to take some of the load off me to explain the reasons for every change.

- Process Overhead: People were SAFe-trained and used to long-lived branches, delivering complete stories, and managing to story points. The sheer amount of SAFe process was itself an impediment.

- Me: I also didn’t know how to test or how to deliver partially complete changes continuously. I had a copy of Continuous Delivery, Google, and a goal: daily production delivery.

The SAFe overhead was a real problem. We had a drive-by scrum master in the beginning who was there to make sure we followed the SAFe process. Step one on our journey was when I told the team, “From now on, we are using minimal viable process. We will start with zero process and only add things that help us reach our North Star.” The scrum master didn’t like it. I didn’t care.

The testing problem was the most critical. To meet our goal, we needed to be able to commit a change and have the pipeline certify that it was production-deployable. Everything about how we tested had to change. The problem was that we were playing the same game most teams play: get a story from the PO with some vague acceptance criteria, code it, and ask the PO, “Is this the rock you meant?” Coding tests in that environment feels like a huge waste of time. Also, we didn’t have enough testing knowledge on the team for everyone to write effective tests.

The solution came from the book: behavior-driven development (BDD).

Given I don’t know what we’re building…

BDD was the key that unlocked many other capabilities. I’d consider it foundational to all the improvements that followed.

BDD aligns technical work with business outcomes using structured “Given-When-Then” scenarios to guide development. It ensures a shared understanding between developers, testers, and business people and opens the door to automated acceptance tests using acceptance test-driven development (ATDD).

Using BDD enabled us to achieve a shared understanding of the business process for each feature.

- It helped the team build testing skills as we went through positive and negative scenarios.

- It provided natural points to slice work up since each small scenario is a deliverable behavior. In fact, our definition of “ready to start” was that anyone could pick up any test scenario and that the team could complete the story in 2 days or less.

- Everything became 1 story point, and we eliminated the face-palmingly silly process of debating story points with cards.

So now we had thin slices of work that the team could swarm with test scenarios they defined. Automating those became an implementation detail instead of making tests up on the fly.
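As an illustration of how a Given-When-Then scenario becomes an automated acceptance test, here is a plain-Java sketch covering one positive and one negative scenario. A BDD tool such as Cucumber can bind the scenario text to step definitions, but that tooling is left out to keep the example self-contained. The receiving domain, the `Shipment` class, and its rules are all hypothetical.

```java
final class ReceivingScenarios {

    // Hypothetical domain object, invented for this illustration.
    static final class Shipment {
        private final int expectedCases;
        private int receivedCases;

        Shipment(int expectedCases) { this.expectedCases = expectedCases; }

        void receive(int cases) {
            if (cases <= 0) throw new IllegalArgumentException("cases must be positive");
            receivedCases += cases;
        }

        boolean isComplete() { return receivedCases == expectedCases; }
        boolean hasOverage() { return receivedCases > expectedCases; }
    }

    // Scenario: receiving the full quantity completes the shipment
    //   Given a shipment expecting 10 cases
    //   When 10 cases are received
    //   Then the shipment is complete with no overage
    static boolean fullReceiptCompletesShipment() {
        Shipment shipment = new Shipment(10);                   // Given
        shipment.receive(10);                                   // When
        return shipment.isComplete() && !shipment.hasOverage(); // Then
    }

    // Scenario (negative path): receiving too much is flagged
    //   Given a shipment expecting 10 cases
    //   When 12 cases are received
    //   Then an overage is flagged and the shipment is not complete
    static boolean overReceiptFlagsOverage() {
        Shipment shipment = new Shipment(10);
        shipment.receive(12);
        return shipment.hasOverage() && !shipment.isComplete();
    }

    public static void main(String[] args) {
        System.out.println("full receipt scenario passes: " + fullReceiptCompletesShipment());
        System.out.println("over-receipt scenario passes: " + overReceiptFlagsOverage());
    }
}
```

Each scenario is small enough to be its own slice of work, and the test body follows directly from the agreed wording.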

To improve this further, we’d define the behavior changes of each of our several services using the same process. We’d also define any contract changes between services as tests, so multiple people could work on the same task across different services without worrying about integration issues.
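The contract-test idea can be sketched in plain Java. This is a hand-rolled illustration, not the API of a contract-testing tool such as Pact, and the inventory "item" contract, its field names, and the `canPick` logic are hypothetical. The key property is that the provider's verification and the consumer's stub both derive from one shared contract, so a breaking change fails a test rather than an integration environment. Allowing extra fields also supports the rule that services deploy independently, in any order.

```java
import java.util.Map;

final class ContractSketch {

    // Hypothetical contract: the fields (and types) the consumer relies on
    // in the inventory service's response. Both sides test against this.
    static final Map<String, Class<?>> ITEM_CONTRACT = Map.of(
            "sku", String.class,
            "onHand", Integer.class);

    // Provider-side check: does a real response satisfy the contract?
    // Extra fields are allowed, so the provider can add data without
    // breaking consumers (a tolerant-reader style rule).
    static boolean satisfiesContract(Map<String, Object> response) {
        for (Map.Entry<String, Class<?>> field : ITEM_CONTRACT.entrySet()) {
            Object value = response.get(field.getKey());
            if (!field.getValue().isInstance(value)) return false; // missing or wrong type
        }
        return true;
    }

    // Consumer-side stub, verified against the same contract, so consumer
    // tests never need the real provider.
    static Map<String, Object> stubItem(String sku, int onHand) {
        Map<String, Object> stub = Map.of("sku", sku, "onHand", onHand);
        if (!satisfiesContract(stub)) throw new IllegalStateException("stub drifted from contract");
        return stub;
    }

    // Hypothetical consumer logic under test.
    static boolean canPick(Map<String, Object> item, int qty) {
        return (Integer) item.get("onHand") >= qty;
    }

    public static void main(String[] args) {
        // Provider adds a field: still compatible.
        Map<String, Object> additive = Map.of("sku", "ABC-123", "onHand", 7, "location", "A-01");
        // Provider renames a field: the contract check fails before deploy.
        Map<String, Object> breaking = Map.of("sku", "ABC-123", "quantity", 7);
        System.out.println(satisfiesContract(additive)); // true
        System.out.println(satisfiesContract(breaking)); // false
        System.out.println(canPick(stubItem("ABC-123", 5), 3)); // true
    }
}
```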

Steadily, the team improved their testing muscles and soon surpassed the skills of the testing team assigned to us. In fact, that testing team became an impediment, so I had them removed from my team.

Designing Tests for the Pipeline

CD places specific demands on a test suite: it must be very fast, 100% deterministic, and comprehensive. In addition, we had an architectural rule to follow: any service can deploy independently of any other service and in any sequence. This not only enables extreme agility but also makes every change, including emergency changes, less risky.

While the testing team's default was to build an E2E regression suite, that wouldn’t work for CD. Instead, we had layers of tests: some unit tests, more sociable unit tests, contract tests, tests for contract mocks, etc. All of these were stateless. Any flake was terminated with extreme prejudice. Since everything we did was aligned with domain-driven design, none of our services mutated data owned by any other service. We knew that if our contracts were solid, the components would integrate correctly.
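A common source of flaky tests is hidden dependence on the wall clock. One general technique for removing it in Java, shown here as a minimal sketch rather than a description of how our suites handled time, is to inject a `java.time.Clock` and use `Clock.fixed` in tests. The "late trailer" rule and the 15-minute grace period are hypothetical.

```java
import java.time.Clock;
import java.time.Duration;
import java.time.Instant;
import java.time.ZoneOffset;

final class DockAppointment {

    // Hypothetical rule: a trailer is late if it arrives more than
    // 15 minutes after its scheduled time.
    static final Duration GRACE = Duration.ofMinutes(15);

    private final Clock clock;

    DockAppointment(Clock clock) { this.clock = clock; }

    boolean isLate(Instant scheduledArrival) {
        // Reads "now" from the injected clock instead of Instant.now(),
        // so a test controls time and never flakes near the boundary.
        return Instant.now(clock).isAfter(scheduledArrival.plus(GRACE));
    }

    public static void main(String[] args) {
        Instant scheduled = Instant.parse("2024-01-01T08:00:00Z");
        Clock at0814 = Clock.fixed(Instant.parse("2024-01-01T08:14:00Z"), ZoneOffset.UTC);
        Clock at0816 = Clock.fixed(Instant.parse("2024-01-01T08:16:00Z"), ZoneOffset.UTC);
        System.out.println(new DockAppointment(at0814).isLate(scheduled)); // false
        System.out.println(new DockAppointment(at0816).isLate(scheduled)); // true
    }
}
```

In production the class would receive `Clock.systemUTC()`; in tests, a fixed clock makes the result identical on every run.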

All of these improvements enabled us to meet and exceed our first goal, daily integration to the trunk.

Why Can’t We CD?

Months of improvement paid off. I built a Grafana dashboard to display integration frequency trends to the team, and we were ripping through work. But we couldn’t yet deploy to production — partly because we were waiting on our new database, mostly because of fear.
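Integration frequency is simple to compute once merges to trunk are recorded. The dashboard's actual data source and query aren't described here, so this is a minimal Java sketch under stated assumptions: each merge is a hypothetical `TrunkMerge(developer, day)` record, and the headline number is normalized per developer per working day.

```java
import java.time.LocalDate;
import java.util.List;
import java.util.SortedMap;
import java.util.TreeMap;
import java.util.stream.Collectors;

final class IntegrationFrequency {

    // Hypothetical record of one merge to trunk.
    record TrunkMerge(String developer, LocalDate day) {}

    // Average trunk integrations per developer per working day.
    static double perDeveloperPerDay(List<TrunkMerge> merges, int developers, int workingDays) {
        if (developers <= 0 || workingDays <= 0) {
            throw new IllegalArgumentException("developers and workingDays must be positive");
        }
        return (double) merges.size() / ((double) developers * workingDays);
    }

    // Merges per day, sorted by date: the series a trend chart would plot.
    static SortedMap<LocalDate, Long> dailyTrend(List<TrunkMerge> merges) {
        return merges.stream().collect(Collectors.groupingBy(
                TrunkMerge::day, TreeMap::new, Collectors.counting()));
    }

    public static void main(String[] args) {
        LocalDate monday = LocalDate.of(2024, 3, 4);
        List<TrunkMerge> merges = List.of(
                new TrunkMerge("ana", monday), new TrunkMerge("ben", monday),
                new TrunkMerge("ana", monday.plusDays(1)), new TrunkMerge("ana", monday.plusDays(1)),
                new TrunkMerge("ben", monday.plusDays(1)), new TrunkMerge("cy", monday.plusDays(1)));
        System.out.println(dailyTrend(merges));               // {2024-03-04=2, 2024-03-05=4}
        System.out.println(perDeveloperPerDay(merges, 3, 2)); // 6 / (3 * 2) = 1.0
    }
}
```

Normalizing per developer keeps the trend comparable as team size changes; a value of at least 1.0 matches the goal of daily integration to trunk.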

The business didn’t trust us. Stability had been a chronic issue for years, and now we were doing something radically different that they didn’t understand.

It took months of patient persuasion, demos, and evidence. Finally, they let us deploy our new, low-risk screen. After that success, I assumed permission to continue, and we did: weekly at first, because of the process overhead of change control and the change advisory board (CAB).

Later, when our platform team automated change control, we deployed multiple times per day. My dashboard had us averaging five deploys per day and frequently exceeding that.

It Changed Us

We deployed updates, alerted beta users in Slack, and got feedback instantly. If users spotted an issue, we hardened tests, fixed it, and redeployed. If they had an idea, we could design, code, and ship it in under two days.

A few months after I left the team, I went back and saw they were tracking their development cycle time (from start to delivery) on their whiteboard. In numbers about a foot tall, it said,

“0.8 days.”
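Development cycle time, measured from start to delivery, reduces to arithmetic over timestamps. The team's whiteboard method isn't described, so this minimal Java sketch assumes each work item records hypothetical `started` and `delivered` instants and reports the mean in calendar days; measuring in working days would be an equally reasonable choice.

```java
import java.time.Duration;
import java.time.Instant;
import java.util.List;

final class CycleTime {

    // Hypothetical work item: when development started and when it reached production.
    record WorkItem(String id, Instant started, Instant delivered) {
        WorkItem {
            if (delivered.isBefore(started)) {
                throw new IllegalArgumentException("delivered before started: " + id);
            }
        }
    }

    // Mean development cycle time in calendar days (fractional).
    static double meanCycleTimeDays(List<WorkItem> items) {
        if (items.isEmpty()) throw new IllegalArgumentException("no work items");
        double totalSeconds = 0;
        for (WorkItem item : items) {
            totalSeconds += Duration.between(item.started(), item.delivered()).toSeconds();
        }
        return totalSeconds / items.size() / Duration.ofDays(1).toSeconds();
    }

    public static void main(String[] args) {
        List<WorkItem> items = List.of(
                new WorkItem("A", Instant.parse("2024-05-06T09:00:00Z"), Instant.parse("2024-05-06T15:00:00Z")),  // 6 h
                new WorkItem("B", Instant.parse("2024-05-06T10:00:00Z"), Instant.parse("2024-05-06T22:00:00Z")),  // 12 h
                new WorkItem("C", Instant.parse("2024-05-07T09:00:00Z"), Instant.parse("2024-05-08T03:00:00Z"))); // 18 h
        System.out.println(meanCycleTimeDays(items) + " days"); // 0.5 days
    }
}
```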

The team was excited to do demos. They had ideas to improve the application. They had pride and the highest morale I’d ever experienced on a development team. They were good and they knew it. They weren’t 10x developers. They were a 10x team.

When our teams are good enough…

Teams’ inability to execute CD has nothing to do with talent. Any team, given clarity, safety, and purpose, can learn CD and improve its engineering in the process.

The gap isn’t people — it’s leadership.

None of this could have happened without the support and trust of our SVP and Sr. Director.

You don’t need better talent.

You already have the talent.

Give them hard problems to solve, the support to solve them, and set them free.