You Automated Your Old Process

“Those who cannot remember the past are condemned to repeat it.” - George Santayana

In 1964, Black and Decker became the first company to run Material Requirements Planning on a computer. MRP is the calculation that tells a manufacturer what to build, what to order, and when. Before software made it fast, manufacturers ran this calculation once a month. Not because monthly was right. Because monthly was all they could afford. The calculation was so laborious that running it more often wasn’t economically viable.

When software made the computation trivial, the transformative adopters asked the right question: if the constraint on frequency is gone, what’s the right frequency? They moved to weekly replanning, then daily. Tighter cycles exposed demand signals they’d been missing, reduced inventory waste, and cut lead times. They got the value that was actually on the table.

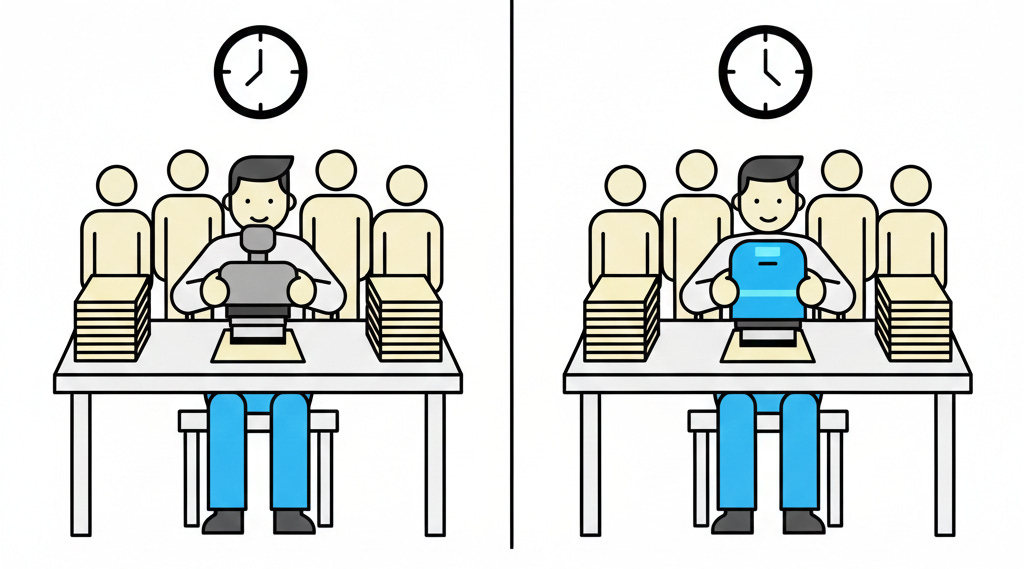

Most manufacturers didn’t ask that question. They automated their monthly cycle. They replaced clerks with computers, produced the same reports faster, declared the project a success, and kept doing exactly what they’d been doing at exactly the same cadence. Eliyahu Goldratt, the physicist and management theorist who wrote “The Goal” and developed the Theory of Constraints, documented the pattern: companies had bought a platform that should have transformed their planning horizon and mostly used it to do their old process more efficiently. They bore the full cost of adoption and captured a fraction of the available value.

That was sixty years ago. We are still making the same mistake, in every platform generation, on a predictable schedule.

The CD Pipeline That Changed Nothing

In the mid-2010s, continuous delivery platforms made automated deployment pipelines accessible to teams that had previously been deploying through months-long release cycles. I watched teams with quarterly release calendars adopt Jenkins, then CircleCI, then GitHub Actions, and keep their quarterly release calendar.

The pipeline was real. The tests ran, the artifacts got built, and then the code sat waiting for the deployment window, the CAB approval, the coordination call with five other teams, and the rollback runbook review. The constraint was never the mechanics of deploying. It was the batch size, the approval theater, and the coordination overhead built up over years to manage the risk of infrequent, large releases. Automating the build didn’t touch any of that. It encoded it.

There’s a version of this failure that’s harder to see because it looks like progress. Some teams went further than automating the release ceremony: they added unit tests, static analysis, and a deployment to staging. They called it a CD pipeline and weren’t wrong about what they built. They were wrong about what it was for. Automating the build isn’t continuous delivery. The question CD forces is whether you can automate enough confidence that human approval is no longer the constraint. That means functional tests, contract tests, security scans, performance baselines, and deployment verification that confirms the change works in production. For the teams that built all of that, the pipeline became the quality signal. The ones who stopped at unit tests just moved the manual gate a few steps to the right.

The teams that did the harder work unlocked something different: a single change in production within hours, user feedback before the next thing was built, blast radius small enough that rollback stopped being a project, and a cost per deployment that dropped to nearly zero. That last one changes the economics of every decision. When deploying costs almost nothing, the argument for batching changes disappears. The justification for the CAB disappears. The weekend release window disappears. The risk didn’t go away. It got distributed across dozens of small changes instead of concentrated in one large, infrequent release.

Think of it as dollar cost averaging. Investing a fixed amount regularly smooths out the volatility of trying to time the market perfectly. Frequent small deployments do the same thing to release risk: each change is small enough that a problem is cheap to find and fix, and no single deployment is likely to carry enough weight to threaten the business if something goes wrong.
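The arithmetic behind that analogy can be sketched in a few lines. This is an illustrative model, not measured data: it assumes every change independently carries the same probability of a defect, and both `P_DEFECT` and `TOTAL_CHANGES` are made-up values chosen for the example.

```python
def p_release_has_defect(changes_per_release: int, p_defect: float) -> float:
    """Probability that a release contains at least one defective change,
    assuming each change is defective independently with probability p_defect."""
    return 1.0 - (1.0 - p_defect) ** changes_per_release


# Hypothetical numbers, for illustration only.
P_DEFECT = 0.02      # assumed chance that any single change is defective
TOTAL_CHANGES = 60   # assumed number of changes shipped over one quarter

# One quarterly release carrying every change at once.
big_batch = p_release_has_defect(TOTAL_CHANGES, P_DEFECT)

# The same changes shipped one at a time.
single_change = p_release_has_defect(1, P_DEFECT)

# The expected number of defects is identical either way.
expected_defects = TOTAL_CHANGES * P_DEFECT

print(f"Release of {TOTAL_CHANGES} changes: {big_batch:.0%} chance of at least one defect")
print(f"Release of 1 change: {single_change:.0%} chance of a defect")
print(f"Expected defects over the quarter in both cases: {expected_defects:.1f}")
```

Under these assumptions the total number of defects does not change. What changes is how many can land at once, and how many changes you have to search when a release goes wrong: sixty in the batch, one in the single-change release.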

The Cloud That Didn’t Move

Cloud platforms promised on-demand infrastructure. Provision a server in minutes, not months. Elastic capacity. Pay for what you use. The economic model and the technical capability were genuinely transformative.

Most enterprises lifted their on-prem architecture into the cloud and preserved everything that made on-prem painful. The change management board still reviewed infrastructure requests. The provisioning ticket still went through a queue. The architecture still assumed servers were pets you named and kept alive for years. Teams still waited weeks for environments because the process assumed scarcity, even after the scarcity was gone.

They got the infrastructure bill without the agility. The cloud made on-demand provisioning trivial; they kept requesting it through a process designed for a world where it wasn’t. The constraint that justified the process was eliminated. The process wasn’t.

The organizations that rethought their processes alongside the migration got something else entirely: environments on demand in minutes rather than weeks, the ability to run dozens of experiments simultaneously without fighting over shared infrastructure, auto-scaling that absorbed traffic spikes without a 2 AM page, and the option to kill a bad deployment and spin up a replacement before most users noticed. They stopped treating infrastructure as a scarce resource to be rationed and started treating it as a utility to be consumed. That changes everything about how you design, test, and ship.

I’ve seen organizations spend years and millions migrating to cloud and come out the other side with longer deployment cycles than they started with. The platform made it possible to move fast. They brought their organizational immune system with them.

AI Is the Current Version of This Pattern

The pattern has already appeared twice in software delivery, in CD and in cloud. The third iteration is underway.

Right now, teams are using AI-assisted development. Some are generating code faster. The ones who are already moving well, with solid engineering discipline and good delivery practices, are seeing real acceleration. Everyone else is either struggling to get useful output or generating tech debt and defects at a pace that makes the throughput illusion obvious in retrospect.

And the code is reaching the same bottlenecks it always has: long-lived feature branches waiting for integration, manual testing gates, change approval boards that survived the move to cloud and are still very much alive, infrequent releases that batch up two months of work and require four days of regression testing to feel safe enough to ship.

The constraint moved downstream and got worse. More code, same bottleneck. The batch size is larger. The integration risk is higher. The regression suite is bigger. The approval board is busier.
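A rough way to see why: model the approval-and-release stage as a step with fixed weekly capacity and watch what happens when more work arrives than it can absorb. The sketch below is a deliberately simple model, and the rates in it are hypothetical values picked for illustration, not measurements.

```python
from typing import List


def backlog_over_time(arrivals_per_week: float,
                      capacity_per_week: float,
                      weeks: int) -> List[float]:
    """Changes waiting in front of a fixed-capacity stage at the end of each week."""
    backlog = 0.0
    history = []
    for _ in range(weeks):
        # Work arrives, the stage processes what it can, the rest waits.
        backlog = max(0.0, backlog + arrivals_per_week - capacity_per_week)
        history.append(backlog)
    return history


# Hypothetical rates, for illustration only.
CAPACITY = 10.0  # changes the downstream stage can absorb per week

before_ai = backlog_over_time(arrivals_per_week=10.0, capacity_per_week=CAPACITY, weeks=8)
with_ai = backlog_over_time(arrivals_per_week=15.0, capacity_per_week=CAPACITY, weeks=8)

print("Backlog after 8 weeks, before AI:", before_ai[-1])
print("Backlog after 8 weeks, with AI:  ", with_ai[-1])
```

In this model, faster code generation changes nothing about how much ships. It only changes how much is waiting, and the waiting pile grows every week the downstream capacity stays the same.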

The organizations that have restructured around AI are compressing work that used to take days into hours. A developer with a clear acceptance test, a well-scoped task, and a delivery pipeline enhanced with AI-enabled validations that catch defects at their source can hand off implementation to an agent, review the output against the test, and ship, skipping the multi-day cycle of write, review, wait, revise, wait, merge, wait, deploy. They’re running more experiments, getting feedback faster, and learning at a rate that compounds. The platform isn’t making them faster at their old process. It’s enabling a fundamentally different cadence.

AI rewards organizations that have already solved their delivery structure. If you have trunk-based development, small batches, automated testing you trust, and deployment frequency measured in days or hours, AI-assisted development is a genuine accelerant. You are producing more of the right kind of work, and it flows through your system at the rate your system was built to support.

If you haven’t solved those things, AI is generating inventory that accumulates in front of the same bottlenecks that have always been there. The inventory is just building faster. So is the blast radius. So is the business risk. The constraint is more visible now, not less. Which is, in a grim way, useful diagnostic information if you’re willing to look at it.

This split isn’t random. It’s structural. I wrote about the mechanism in more detail in “AI Is a High-Pass Filter for Software Delivery.”

The Pattern Is Not an Accident

Organizations don’t automate their old processes because they’re stupid. They do it because it’s the path of least resistance. The platform reduces the cost of doing the existing thing. The team captures that efficiency gain. Management sees the numbers improve. The project is declared a success. Nobody had to fight the organizational immune system to restructure the process. Nobody had to explain to a VP why a constraint that no longer exists still has a process built around it.

The transformative gains are in the restructuring. The restructuring requires admitting that your process was built around a constraint that no longer exists. That is not a comfortable conversation in most organizations. So it doesn’t happen. The platform arrives, the old process continues, slightly faster.

Goldratt’s critique of MRP adoption applies verbatim to CD, cloud, and AI: the tool exposed what was possible, and most organizations used it to automate what they were already doing.

The Diagnostic

One question, in two parts. Apply it to your AI platform adoption right now.

When your team started using AI-assisted development, did your deployment frequency change? Did your change failure rate go down? If you can’t answer both, you haven’t removed the constraint. You’ve automated around it.

If the answer is no on both, or if you don’t know what either number is, you have automated your old process. You are bearing the full cost and complexity of AI adoption: the licensing, the tooling integration, the workflow changes, the security review, the training. And you are capturing a fraction of the available value because you did not ask what the platform makes possible that you couldn’t do before.
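If you don’t have either number, both can be computed from a plain deployment log. The following is a minimal sketch, assuming you can export one record per production deployment with a date and a flag for whether it caused a failure that needed remediation. The `Deployment` type, the helper names, and the sample records are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class Deployment:
    day: date
    failed: bool  # True if this deployment caused a failure that needed remediation


def deployments_per_30_days(deploys: List[Deployment], period_days: int) -> float:
    """Deployment frequency, normalized to a 30-day window."""
    return len(deploys) / period_days * 30


def change_failure_rate(deploys: List[Deployment]) -> Optional[float]:
    """Share of deployments that caused a failure; None if nothing was deployed."""
    if not deploys:
        return None
    return sum(d.failed for d in deploys) / len(deploys)


# Hypothetical records for the 90 days before and after adopting AI tooling.
before = [
    Deployment(date(2024, 1, 31), failed=False),
    Deployment(date(2024, 3, 29), failed=True),
]
after = [
    Deployment(date(2024, 4, 30), failed=True),
    Deployment(date(2024, 5, 31), failed=False),
    Deployment(date(2024, 6, 28), failed=True),
]

for label, deploys in (("before", before), ("after", after)):
    freq = deployments_per_30_days(deploys, period_days=90)
    cfr = change_failure_rate(deploys)
    print(f"{label}: {freq:.2f} deployments per 30 days, change failure rate {cfr:.0%}")
```

In this made-up sample the frequency barely moves and the failure rate gets worse, which is the signature of an automated old process. The point of the sketch is only that the comparison is cheap to run once the log exists.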

The question is not “how do we use AI to write code faster?” The question is: if generating code is no longer the constraint, what is, and how do we eliminate it?

Black and Decker figured that out in 1964. Most of their competitors didn’t.