# Your Testers Aren’t Doing QA

I’ve had many arguments with testers who DevSplain to me that developers can’t test and that we must have testers inspecting work product to ensure it’s deliverable. I’ve directly measured how and why that fails and how it increases risk. It’s not an opinion. I have the data. The one thing I’ve not talked much about is WHY they are bad at their jobs.

Not all of them. Some testers are brilliant systems thinkers who make everyone around them better. But the ones DevSplaining to me about how developers can’t test? They are doing quality control, not quality assurance, and they don’t know the difference.

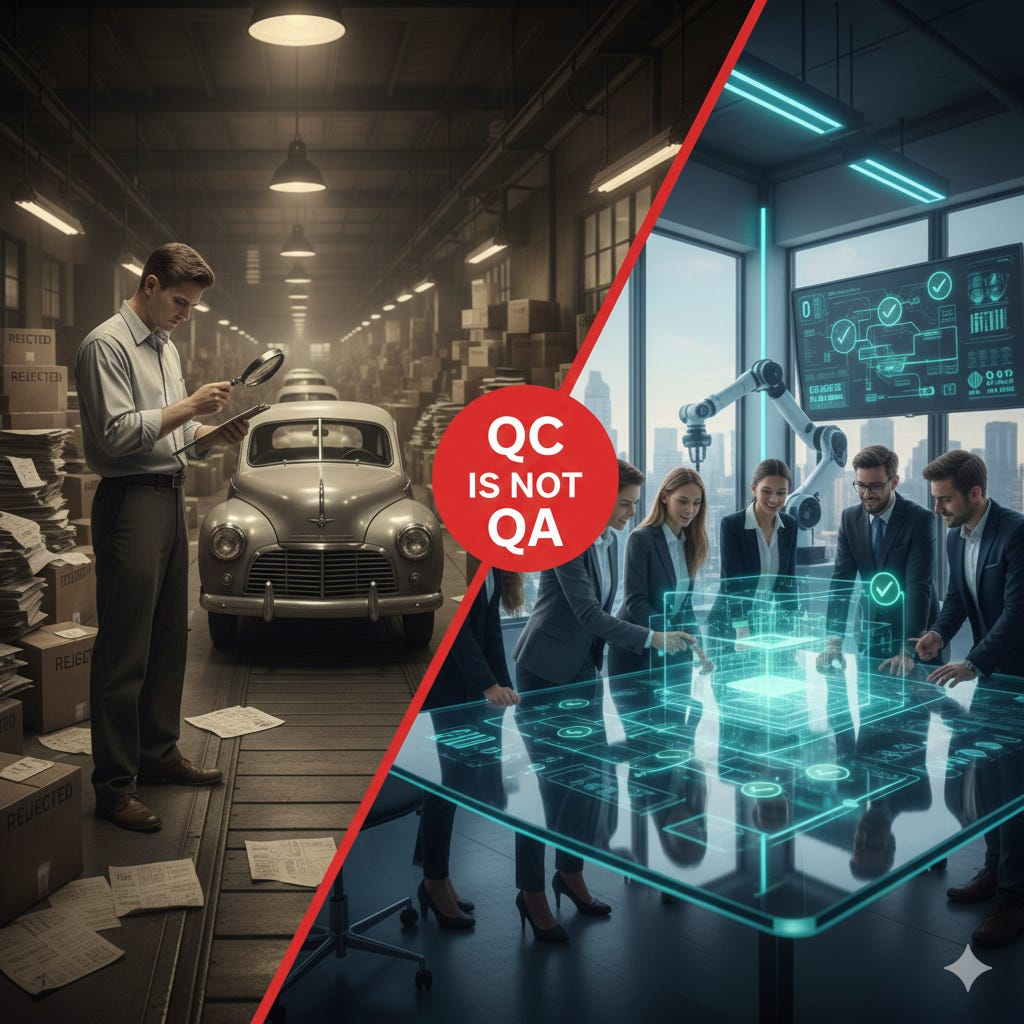

## QC Is Not QA

They are checking outputs, not doing QA. They will claim that test automation is “only checking” and that they are the REAL testers because they “explore.” The only thing they explore is whatever hasn’t been automated yet. That’s not exploration. That’s gap-filling. They are performing quality control -- inspecting outputs after the fact -- not quality assurance.

Here’s the difference. QC asks, “Does this output meet the specification?” QA asks, “Why did this defect get created in the first place, and how do we prevent this class of defects from ever happening again?” QC is Ford in 1958 averaging quality scores across cars rolling off the assembly line and shipping anything that hit the minimum. QA is Toyota stopping the line to fix the process that allowed the defect.

If you spend your days running manual test scripts against completed features and logging bugs in Jira, you are a quality control inspector. You are not assuring anything. You are reacting to defects that already exist, and you are doing it at the slowest, most expensive point in the delivery process. The code is written. The developer has moved on to the next task. The context is gone. The cost of repair just went up by an order of magnitude.

## The System Creates Defects

The testers I argue with don’t exercise the systems thinking and cause analysis skills required to actually assure quality. They don’t look upstream. They don’t ask why defects are being created. They sit at the end of the line and catch what they can.

Real quality assurance requires recognizing that defects are created by the system within which the product is built.

When management assigns a sprint’s worth of work to each individual developer, they create a system that disincentivizes people from helping each other. If I’m loaded to capacity with my own stories, I’m not going to stop and pair with a teammate who’s stuck. I’m not going to do a thoughtful code review. I’m going to rubber-stamp the pull request and get back to my own pile. That creates defects.

When teams are distributed across time zones so widely that a simple question takes 12 hours to get a response, that creates defects. A developer makes an assumption instead of waiting half a day for an answer. That assumption is often wrong. Multiply that by every question across every developer across every sprint, and you have a defect factory. No amount of manual testing at the end of the sprint fixes this. The defects are baked in by the organizational design.

When code review happens through asynchronous review comments instead of a real-time conversation, it creates defects. I’ve measured this repeatedly. A five-day development effort followed by a week of review ping-pong, with questions that would have taken 30 seconds to answer in person requiring hours of back-and-forth through a pull request. By the time the review is done, the original developer has lost context, the reviewer never had enough context, and the changes are too large for anyone to effectively evaluate. That’s not a quality process. That’s a quality theater matinee.

When teams are not working together to define the test plan before building the software and instead try to bolt on tests after the fact, it creates defects. When running tests isn’t an automatic process triggered by every code change, it creates defects. When the test environment is a shared sandbox that three teams are deploying to simultaneously, it creates defects. When the product owner is on another team with different priorities and takes four days to answer a clarification question, it creates defects.

None of these are testing problems. They are system design problems. And the testers who DevSplain to me about how critical their role is? They are oblivious to every single one of them.

## Prevention Over Detection

Defects are prevented when we organize to reduce the conditions that create them, automate those that can be auto-repaired, and accelerate detection to provide information as close to the keyboard as possible.

We design test automation to provide feedback on defects. Not to stop a defect from being delivered, but to learn how that defect was created and design a change in our process to prevent that CLASS of defects. This is the critical distinction. A test that catches a bug is useful. A test that teaches you why the bug happened and leads to a process change that prevents the entire category of bugs? That’s quality assurance.

We ALWAYS use automation for this. We ALWAYS design it to RELIABLY detect as EARLY as possible. Not because we want a faster pipeline -- though we do -- but because we need fast enough feedback to identify HOW the defect occurred and improve our delivery system design. If a test catches a defect three days after the code was written, the developer has to context-switch back, re-read code they’ve already mentally filed away, and guess at what they were thinking. That’s not feedback. That’s archaeology.

When a test catches a defect within minutes of the change, the developer knows exactly what they changed, why they changed it, and what assumption was wrong. That’s actionable information. That’s the difference between “fix this bug” and “fix this system.”

I worked with a team that had a recurring class of integration defects. Every two weeks, something broke at the boundary between their service and an upstream dependency. The QC approach was to add more end-to-end tests. More tests, more coverage, more detection. The defects kept coming.

When we actually looked at the system, we found the root cause. The upstream team deployed on a different cadence, didn’t version their API contracts, and communicated breaking changes through a wiki page that nobody read. The fix wasn’t more tests. The fix was contract-driven development with automated contract verification in both teams’ pipelines. The class of defect disappeared. That’s QA.
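As a rough illustration of what automated contract verification can look like, here is a minimal sketch. It is not the tooling that team used. The contract, the field names (`order_id`, `total_cents`, `coupon`), and the `verify_contract` helper are all hypothetical, and a real setup would more likely use a dedicated contract-testing tool. The idea is that the consumer writes down what it depends on, and both pipelines run the same check on every change.

```python
from dataclasses import dataclass
from typing import Any, Dict, List, Sequence


@dataclass(frozen=True)
class FieldSpec:
    """One field the consumer depends on (hypothetical contract format)."""
    name: str
    type_: type
    required: bool = True


# Hypothetical, versioned contract owned jointly by consumer and provider.
ORDER_CONTRACT_V1: Sequence[FieldSpec] = (
    FieldSpec("order_id", str),
    FieldSpec("total_cents", int),
    FieldSpec("coupon", str, required=False),
)


def verify_contract(payload: Dict[str, Any],
                    contract: Sequence[FieldSpec]) -> List[str]:
    """Return a list of contract violations; an empty list means compatible."""
    violations: List[str] = []
    for spec in contract:
        if spec.name not in payload:
            # Optional fields may be absent; required ones may not.
            if spec.required:
                violations.append(f"missing required field: {spec.name}")
            continue
        value = payload[spec.name]
        if not isinstance(value, spec.type_):
            violations.append(
                f"{spec.name}: expected {spec.type_.__name__}, "
                f"got {type(value).__name__}"
            )
    return violations


if __name__ == "__main__":
    # A provider response that renamed a field the consumer relies on.
    breaking_response = {"order_id": "A1", "total": 12.0}
    for problem in verify_contract(breaking_response, ORDER_CONTRACT_V1):
        print(problem)
```

Run in the provider's pipeline, a check like this fails the build within minutes of the breaking change, while the person who made it still has the context. Run in the consumer's pipeline, it documents exactly which parts of the upstream API are load-bearing.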

## CD Is a QA Process

THIS is how we must think about quality assurance. Test automation requires as much architectural thought as the software we are building. That comes from people with end-to-end delivery-system design knowledge. That knowledge comes from being responsible for the entire SDLC, not a specialist slice of it.

Continuous delivery has always been a QA process, not build and deploy automation. The entire point of CD is to create a system that provides continuous feedback about the health of your delivery process. Every pipeline run is an experiment. Every deployment is a data point. Every defect that penetrates to production is a signal that your system has a gap.

When you work to ramp up the frequency of delivering software, you find the cracks in the system. You find out that the product owner being on another team creates defects. You find out that your deployment process has a manual step that someone forgets at 2 am. You find out that your test data management is so fragile that tests fail randomly, and nobody trusts the pipeline anymore. You find out that your “QA phase” is actually a three-day wait that incentivizes developers to batch up changes, which increases risk, which causes more defects, which requires more QA, which adds more wait time.

CD makes all of this visible. It’s a forcing function for improvement. Not because the tools are magic, but because daily delivery to production exposes every weakness in your system design. You can’t hide behind a two-week sprint and a hardening phase when you’re shipping every day.

## Take Quality Seriously Before Adding AI

If you are not using CD to deliver software and are not using the feedback from CD to continuously harden your software delivery system design, and instead let HR design your quality process -- assigning testers to teams based on headcount ratios, organizing by functional specialty, flexing developers between products to maximize utilization, measuring individual output instead of team outcomes -- don’t add AI to the mix until you take quality seriously.

AI amplifies whatever system it operates within. A well-designed delivery system with fast feedback loops, automated quality gates, and teams organized around outcomes will get better with AI assistance. You can use AI to improve the system instead of naively using it only for coding tasks. A dysfunctional system with manual testing phases, asynchronous handoffs, and testers who think their job is catching bugs will get worse. Faster. AI will help your developers produce more code with more defects at a higher rate, and your QC inspectors will fall even further behind.

You will crash and burn, or at best, spend money without improving your bottom line. Fix the system first. Build quality in. Stop inspecting it in after the fact.

That’s quality assurance.